RESEARCH AREAS

- Computer vision and deep learning:

- Object detection, recognition and tracking using deep learning.

- Semantic segmentation and action recognition using deep learning.

- Depth map estimation and processing using deep learning.

- Multi-cue pedestrian detection and tracking.

- Image/video processing and communication:

- Low-complexity, error-resilient video coding.

- Compressed video sensing.

- Multi-view video coding, processing and transmission.

- Computer vision for robotics:

- Real-time 3D reconstruction using RGB-D.

- RGB-D simultaneous localization and mapping.

- Large-scale 3D scene modeling.

RESEARCH FUNDING

- Korea Electronics Technology Institute (KETI), "Hardware/software co-design of low-complexity video coding algorithms for wireless video surveillance," 2011-2012

- Memsoft, "Power-optimized distributed video codec design for wireless video sensor networks," 2012-2014

- Korea Electronics Technology Institute (KETI), "Stereo-based pedestrian detection and tracking algorithms for advanced driver assistant systems," 2012-2017

- Argonne National Laboratory, "Enhancement of 3D model reconstruction technology," 2012-2017

- Korea Electronics Technology Institute (KETI), "Camera-based artificial intelligence system for autonomous cars," 2017-2021

- Korea Electronics Technology Institute (KETI), "Software and hardware development of cooperative autonomous driving control platform for commercial special and work-assist vehicles," 2022-2026

- HL Mando, "Developing multimodal AI models and embedded AI controller for road surface condition prediction," 2024-2028

RESEARCH PROJECTS

- EMSFormer: Efficient Multi-Scale Transformer for Real-Time Semantic Segmentation

- Efficient Multi-Task Training with Adaptive Feature Alignment for Universal Image Segmentation

- BEVFusion With Dual Hard Instance Probing for Multimodal 3D Object Detection

- Enhancing query formulation for universal image segmentation

- Enhancing Semantically Masked Transformer with Local Attention for Semantic Segmentation

- Voxel Transformer with Density-Aware Deformable Attention for 3D Object Detection

- Enhancing mask Transformer with auxiliary convolution layers for semantic segmentation

- Video object detection using event-aware convolutional LSTM and object relation networks

- Object detection with location-aware deformable convolution and backward attention filtering

- Modeling long- and short-term temporal context for video object detection

- Improving object detection using weakly-annotated multi-label segmentation

- Video object detection with two-path convolutional LSTM pyramid

- Mixed spatial pyramid pooling for semantic segmentation

Transformer-based models have achieved impressive performance in semantic segmentation in recent years. However, the multi-head self-attention mechanism in Transformers incurs significant computational overhead and becomes impractical for real-time applications due to its high complexity and high latency. In this paper, we propose an efficient multi-scale Transformer (EMSFormer) that employs learnable keys and values based on the single-head attention mechanism and a dual-resolution structure for real-time semantic segmentation. Experimental results show that our proposed method can achieve state-of-the-art performance with real-time inference speed on the ADE20K, Cityscapes, and CamVid datasets.

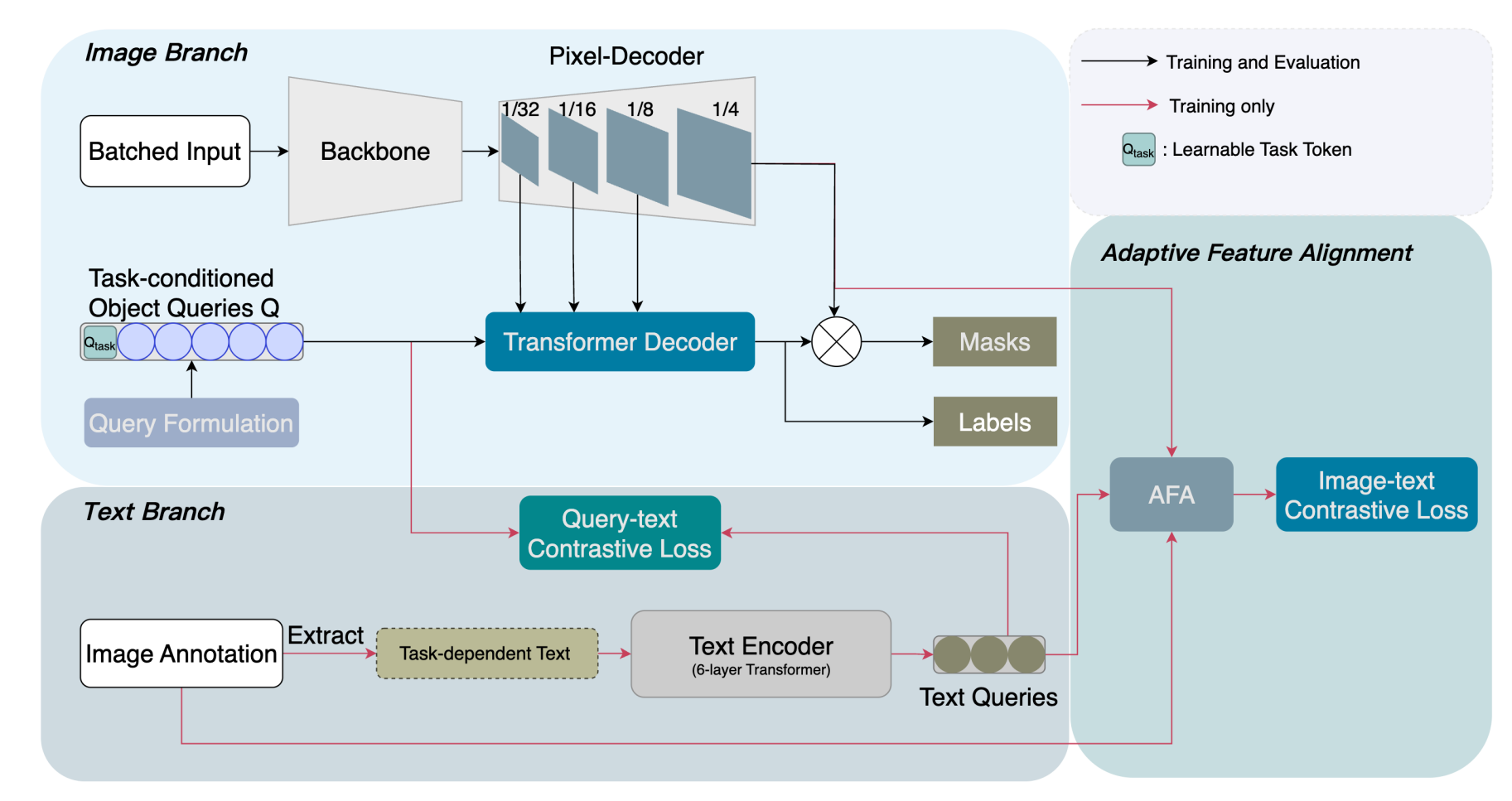
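
As a rough illustration of the "learnable keys and values" idea in the EMSFormer abstract above, the following sketch computes single-head attention in which only the queries come from the input tokens, while the keys and values are free parameters. This is one plausible reading of the abstract, not the published EMSFormer layer; the function name `learnable_kv_attention` and the plain-list representation are assumptions made for illustration. With a fixed number M of learnable keys, the cost grows linearly with the number of tokens N rather than quadratically.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def learnable_kv_attention(queries, keys, values):
    """Single-head attention with parameter keys/values (illustrative sketch).

    queries: N token vectors of size d, taken from the feature map.
    keys:    M learnable vectors of size d (parameters, not input projections).
    values:  M learnable vectors of size d_v.
    Cost is O(N * M * d), i.e. linear in the number of tokens N.
    """
    d = len(keys[0])
    scale = 1.0 / math.sqrt(d)
    d_v = len(values[0])
    out = []
    for q in queries:
        # Scaled dot product between this token and every learnable key.
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
        weights = softmax(scores)
        # Output is a convex combination of the learnable values.
        out.append([sum(w * v[j] for w, v in zip(weights, values))
                    for j in range(d_v)])
    return out
```

Because each output is a convex combination of the M value vectors, the layer acts like a soft lookup into a small learned dictionary, which is where the savings over full token-to-token self-attention come from.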

Universal image segmentation aims to handle all segmentation tasks within a single model architecture and ideally requires only one training phase. Existing approaches generate the task token from a text input (e.g., "the task is panoptic"). However, such text-based inputs merely serve as labels and fail to capture the inherent differences between tasks. The prevailing modality-alignment methods rely on large-scale uni-modal encoders for both modalities and an extremely large amount of paired data for training, and therefore it is hard to apply these existing models to lightweight segmentation models and resource-constrained devices. In this work, we propose Adaptive Feature Alignment (AFA) integrated with a learnable task token to address these issues. The learnable task token automatically captures inter-task differences from both image features and text queries during training, providing a more effective and efficient solution than a predefined text-based token.

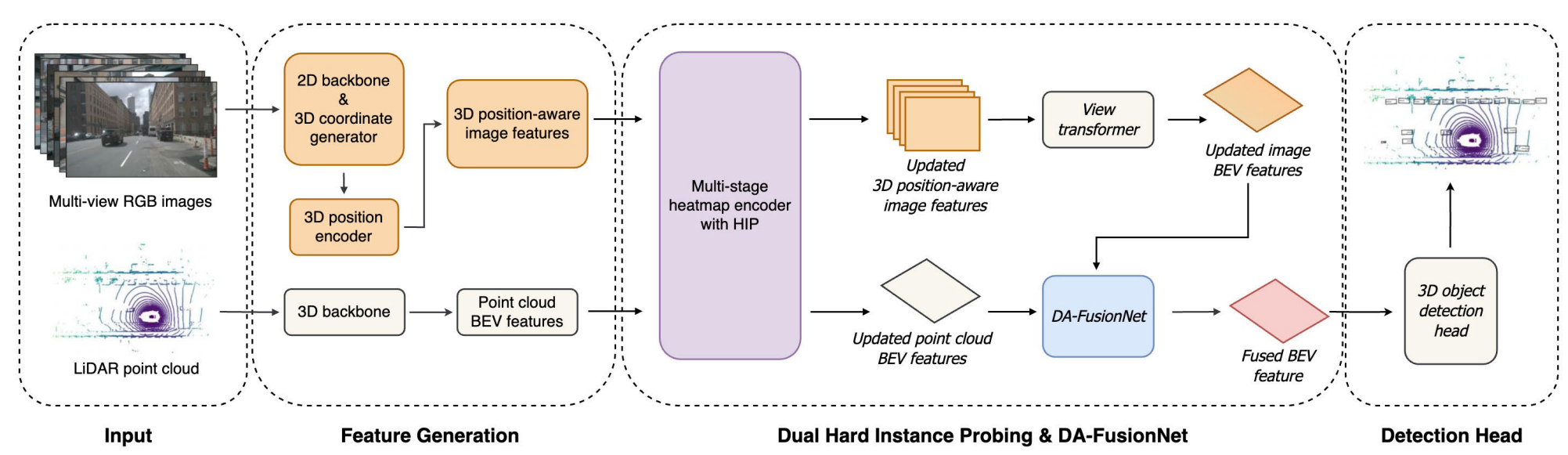

Recent multi-modal fusion methods have been proposed to enhance 3D object detection by combining the geometric accuracy of LiDAR point clouds with the rich semantic features of camera images. However, few methods explicitly address false negatives, and many fail to effectively align multimodal features or model their interaction during the fusion process. To address these challenges, we propose BEVFusion with Dual Hard Instance Probing (BEVFusion-DHIP), a novel 3D object detection framework designed to systematically reduce false negatives. BEVFusion-DHIP incorporates Hard Instance Probing (HIP) into both LiDAR BEV features and 3D position-aware image features, progressively refining the detection of challenging objects across multiple stages. Experimental results on the nuScenes dataset show that the proposed BEVFusion-DHIP outperforms state-of-the-art LiDAR-based and camera+LiDAR-based 3D object detection models.

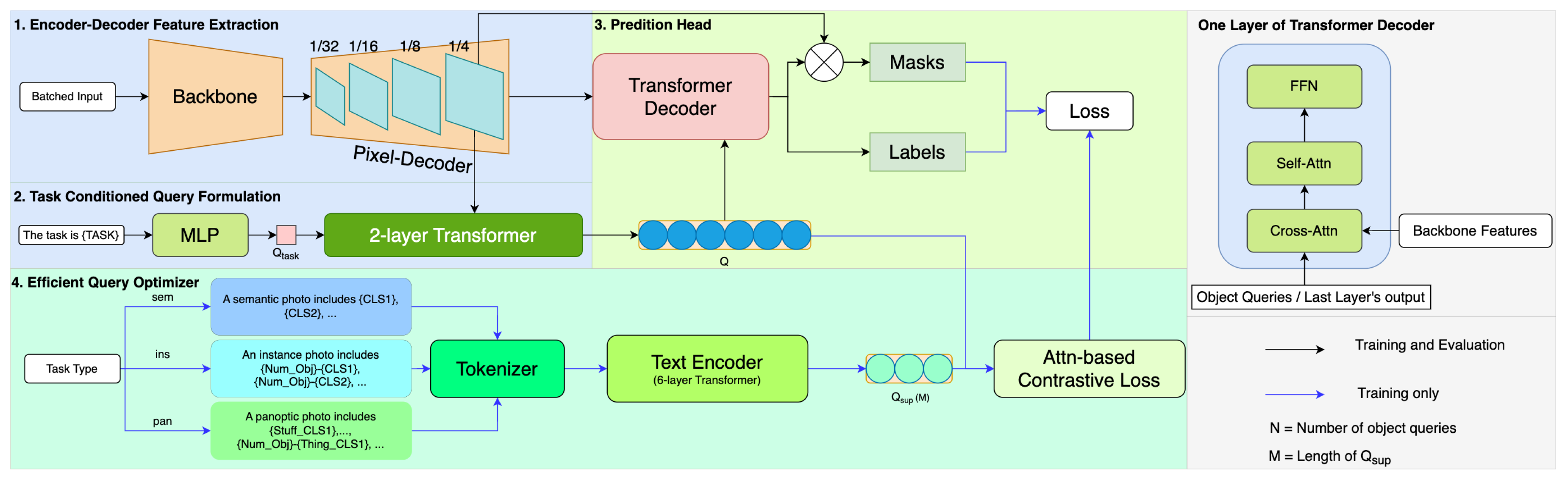

Recent advancements in image segmentation have been notably driven by Vision Transformers. Despite their effectiveness, the pursuit of enhanced capabilities often leads to more intricate architectures and greater computational demands. OneFormer has responded to these challenges by introducing a query-text contrastive learning strategy active during training only. However, this approach has not completely addressed the inefficiency issues in text generation and the contrastive loss computation. To solve these problems, we introduce Efficient Query Optimizer (EQO), an approach that efficiently utilizes multi-modal data to refine query optimization in image segmentation. Our strategy significantly reduces the complexity of parameters and computations by distilling inter-class and inter-task information from an image into a single template sentence. Beyond merely reducing complexity, our model demonstrates superior performance compared to OneFormer across all three segmentation tasks using the Swin-T backbone.

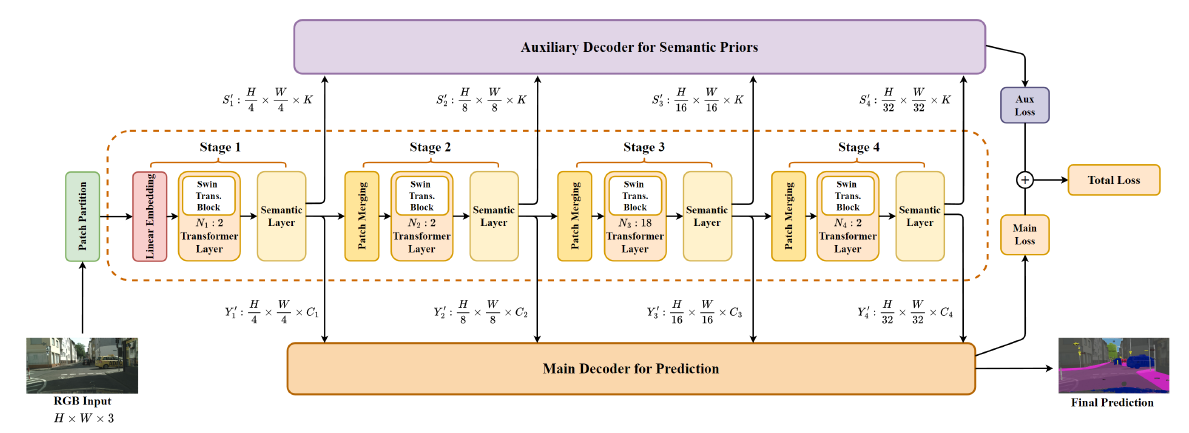

In recent years, the application of Transformer-based semantic segmentation across various visual recognition tasks has yielded remarkable results. However, existing methods often adopt a pretrained backbone that is finetuned for semantic segmentation, resulting in inefficiencies in capturing semantic contextual information during the encoding stage. This limitation adversely affects segmentation performance, leading to suboptimal results. SeMask attempts to tackle this issue by introducing a semantic attention operation during the encoding stage to incorporate image-wide semantic contextual information and enhance segmentation performance. Despite its merits, SeMask relies entirely on Transformer attention mechanisms, which poses challenges in fully exploiting local details crucial for accurate segmentation. To overcome this limitation, our approach introduces a novel semantic layer into the encoder side of a Transformer-based segmentation model. This semantic layer utilizes depthwise convolutions with various kernel sizes to capture multiscale local details. Integrated at different stages of a hierarchical Transformer backbone, it facilitates the acquisition of multiscale semantic contextual information during encoding. This augmentation significantly improves overall segmentation performance, particularly for the more precise segmentation of small objects.

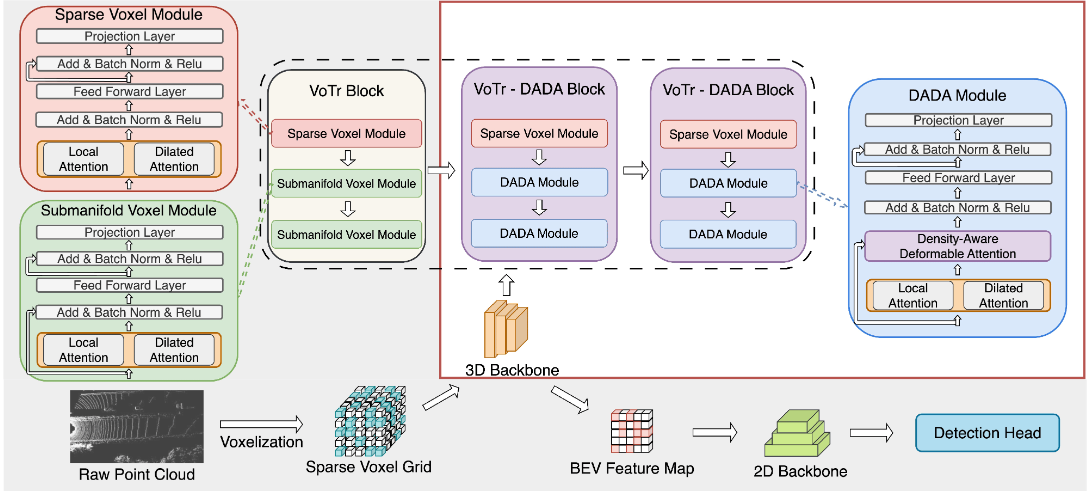

The Voxel Transformer (VoTr) is a prominent model in the field of 3D object detection, employing a transformer-based architecture to comprehend long-range voxel relationships through self-attention. However, despite its expanded receptive field, VoTr's flexibility is constrained by its predefined receptive field. To this end, we present a Voxel Transformer with Density-Aware Deformable Attention (VoTr-DADA), a novel approach to 3D object detection. VoTr-DADA leverages density-guided deformable attention for a more adaptable receptive field. It efficiently identifies critical areas in the input using density features, combining the strengths of both VoTr and Deformable Attention. We introduce the Density-Aware Deformable Attention (DADA) module, specifically designed to focus on these crucial areas while adaptively extracting more informative features. Experimental results show that our proposed method outperforms our baseline method VoTr while maintaining a fast inference speed.

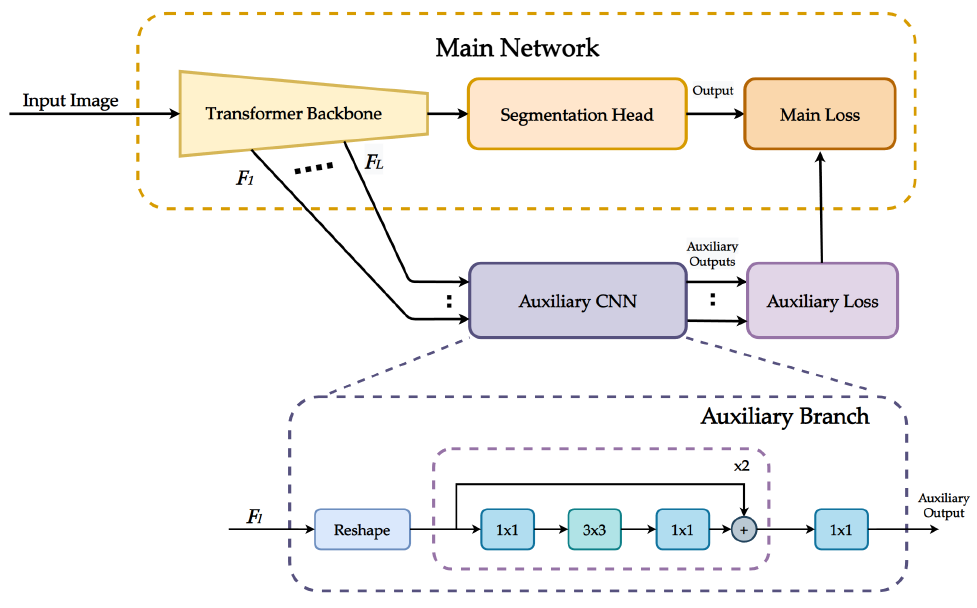

In recent years, semantic segmentation methods based on transformers have demonstrated remarkable performance. Among them, Mask2Former is a prominent transformer-based approach consolidating general image segmentation into a unified model. Despite its effectiveness, Mask2Former encounters challenges in effectively capturing local features and accurately segmenting small objects due to its heavy reliance on transformers. To this end, we propose a simple yet effective method by introducing auxiliary branches to Mask2Former. These additional features enhance the model's capability to learn local information and improve small object segmentation. The introduced auxiliary convolution layers are exclusively required during the training phase. They can be seamlessly omitted during inference, ensuring that the performance boost comes without additional computational costs during the inference stage.

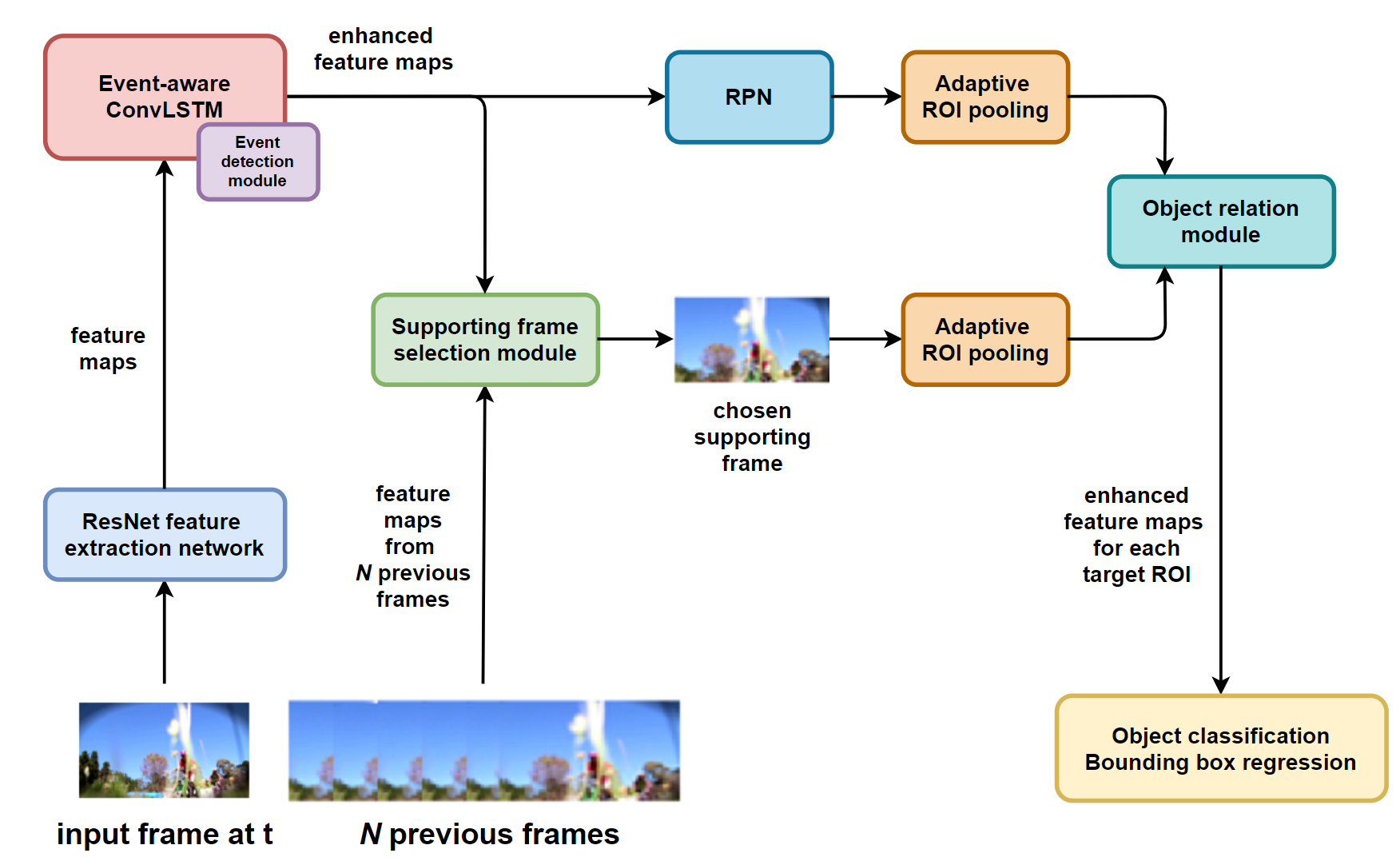

Common video-based object detectors exploit temporal contextual information to improve the performance of object detection. However, detecting objects under challenging conditions has not yet been thoroughly studied. We focus on improving the detection performance for challenging events such as aspect ratio change, occlusion, or large motion. To this end, we propose a video object detection network using event-aware ConvLSTM and object relation networks. Our proposed event-aware ConvLSTM is able to highlight the area where those challenging events take place. Compared with traditional ConvLSTM, the proposed method makes it easier to exploit temporal contextual information to support video-based object detectors under challenging events. To further improve the detection performance, an object relation module using supporting frame selection is applied to enhance the pooled features for the target ROI. It effectively selects the features of the same object from one of the reference frames rather than all of them.

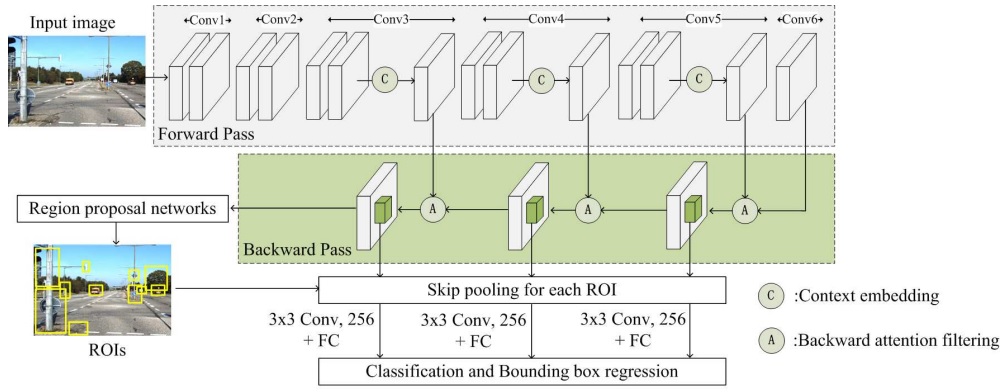

Multi-class and multi-scale object detection for autonomous driving is challenging because of the high variation in object scales and the cluttered background in complex street scenes. Context information and high-resolution features are the keys to achieving good performance in multi-scale object detection. However, context information is typically unevenly distributed, and the high-resolution feature map also contains distracting low-level features. Therefore, we propose a location-aware deformable convolution and a backward attention filtering module to improve the detection performance. The location-aware deformable convolution extracts the unevenly distributed context features by sampling the input from where informative context exists. Different from the original deformable convolution, we apply an individual convolutional layer on each input sampling grid location to obtain a wide and unique receptive field for a better offset estimation. Meanwhile, the backward attention filtering module filters the high-resolution feature map by highlighting the informative features and suppressing the distracting features using the semantic features from the deep layers.

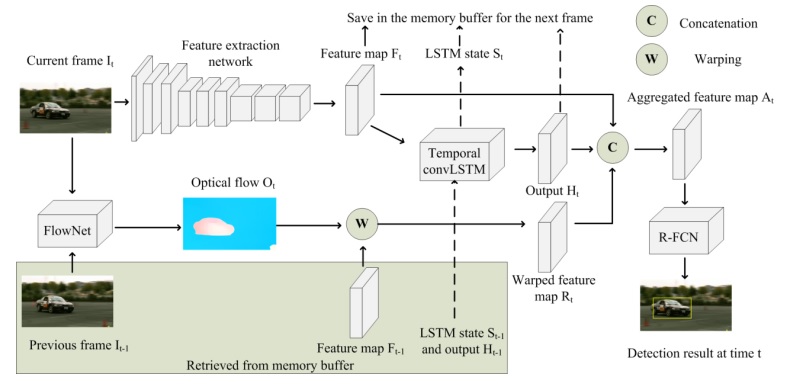

Video object detection enhances the performance of still-image-based object detection by exploiting temporal context information from neighboring frames. Most state-of-the-art video object detectors are non-causal and require many preceding and succeeding frames, which makes them impractical for real-time online detection where succeeding frames are not available. We propose a causal recurrent flow-based method for online video object detection. The proposed method reads only the current frame and one preceding frame from the memory buffer at each time step. Two types of temporal context information are utilized. The short-term temporal context information is utilized by warping the feature map from the nearby preceding frame based on the optical flow. The long-term temporal context information is extracted from the temporal convolutional LSTM, where informative features from distant preceding frames are stored and propagated through time.

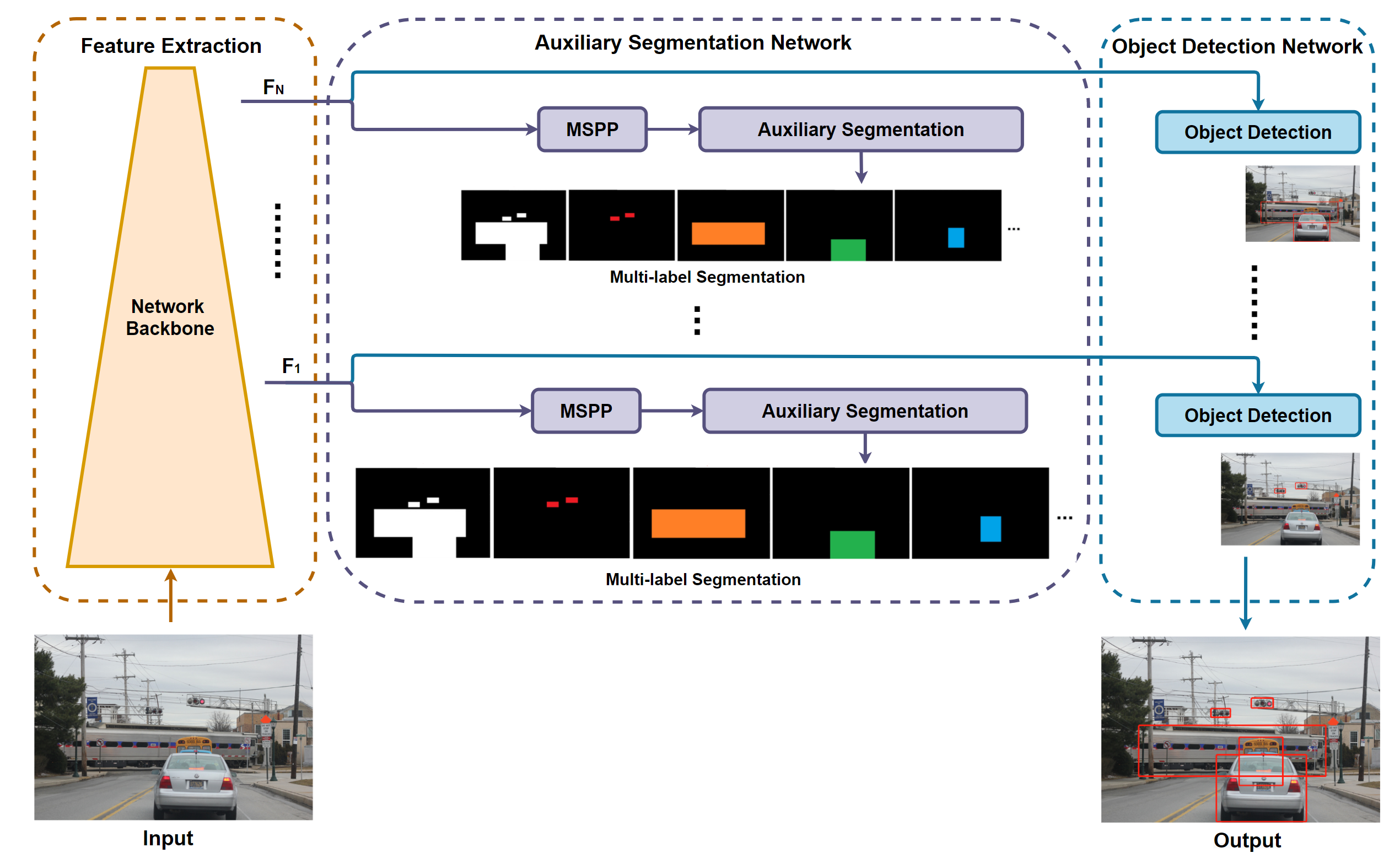
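
The short-term context step described above, warping the preceding frame's feature map with optical flow, can be sketched as a backward warp with bilinear sampling. This is a minimal single-channel illustration and not the project's implementation; the function name `warp_feature_map`, the `(dx, dy)` flow convention (displacement from a current-frame location to its sampling position in the preceding frame), and the clamp-to-border handling are all assumptions.

```python
def warp_feature_map(feat, flow):
    """Backward-warp a 2D feature map with bilinear interpolation.

    feat: H x W list of floats (features of the preceding frame).
    flow: H x W list of (dx, dy) tuples; for each location (x, y) in the
          current frame, (x + dx, y + dy) is where to sample in `feat`.
    Sampling positions outside the map are clamped to the border.
    """
    h, w = len(feat), len(feat[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            dx, dy = flow[y][x]
            # Clamp the sampling position to the valid range.
            sx = min(max(x + dx, 0.0), w - 1.0)
            sy = min(max(y + dy, 0.0), h - 1.0)
            x0, y0 = int(sx), int(sy)  # floor, since sx and sy are >= 0
            x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
            ax, ay = sx - x0, sy - y0
            # Interpolate along x on the two neighboring rows, then along y.
            top = (1 - ax) * feat[y0][x0] + ax * feat[y0][x1]
            bottom = (1 - ax) * feat[y1][x0] + ax * feat[y1][x1]
            out[y][x] = (1 - ay) * top + ay * bottom
    return out
```

In a real detector the same operation would be applied per channel to a multi-channel feature tensor, and the warped features would then be fused with the current frame's features.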

With the rapid development of deep learning techniques, the performance of object detection has increased significantly. Recently, several approaches to joint learning of object detection and semantic segmentation have been proposed to exploit the complementary benefits of the two highly correlated tasks. We propose a weakly-annotated auxiliary multi-label segmentation network that boosts object detection performance without additional computational cost at inference. The proposed auxiliary segmentation network is trained using a weakly annotated dataset and therefore does not require expensive pixel-level annotations for training. Different from the previous approaches, we use multi-label segmentation to jointly supervise auxiliary segmentation and object detection for better occlusion handling. The proposed method can be integrated with any one-stage object detector such as RetinaNet, YOLOv3, YOLOv4, or SSD.

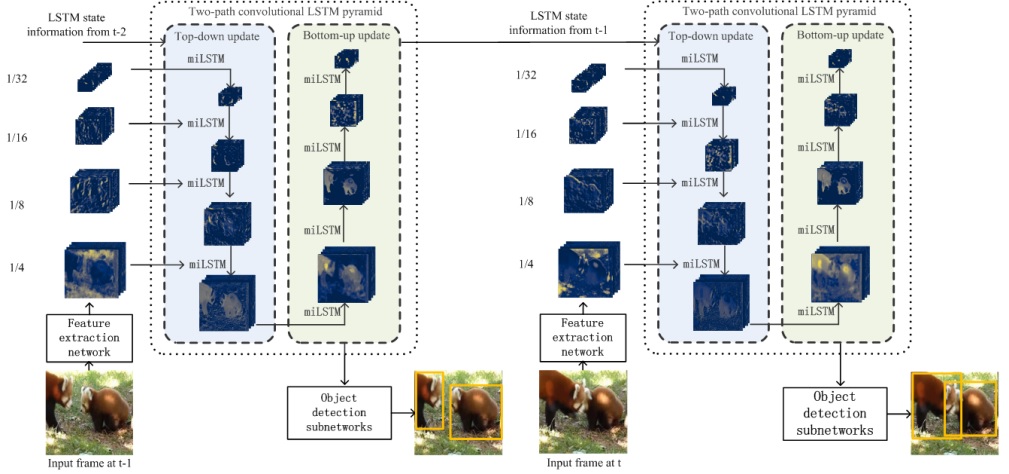

One of the major challenges in video object detection is drastic scale changes of objects due to camera motion. To this end, we propose a two-path Convolutional Long Short-Term Memory (convLSTM) pyramid network designed to extract and convey multi-scale temporal contextual information in order to handle object scale changes efficiently. The proposed two-path convLSTM pyramid consists of a stack of multi-input convLSTM modules. It is updated in top-down and bottom-up pathways so that the temporal contextual information for small-to-large and large-to-small scale changes is exploited. The proposed multi-input convLSTM module uses two input feature maps of different resolutions to store and exchange temporal contextual information of different scales between neighboring convLSTM modules. The outputs of the proposed convLSTM pyramid network constitute a feature pyramid where each feature map contains multi-scale temporal contextual information from earlier frames. The proposed convLSTM pyramid can be combined with various still-image object detectors to improve the performance of video object detection.

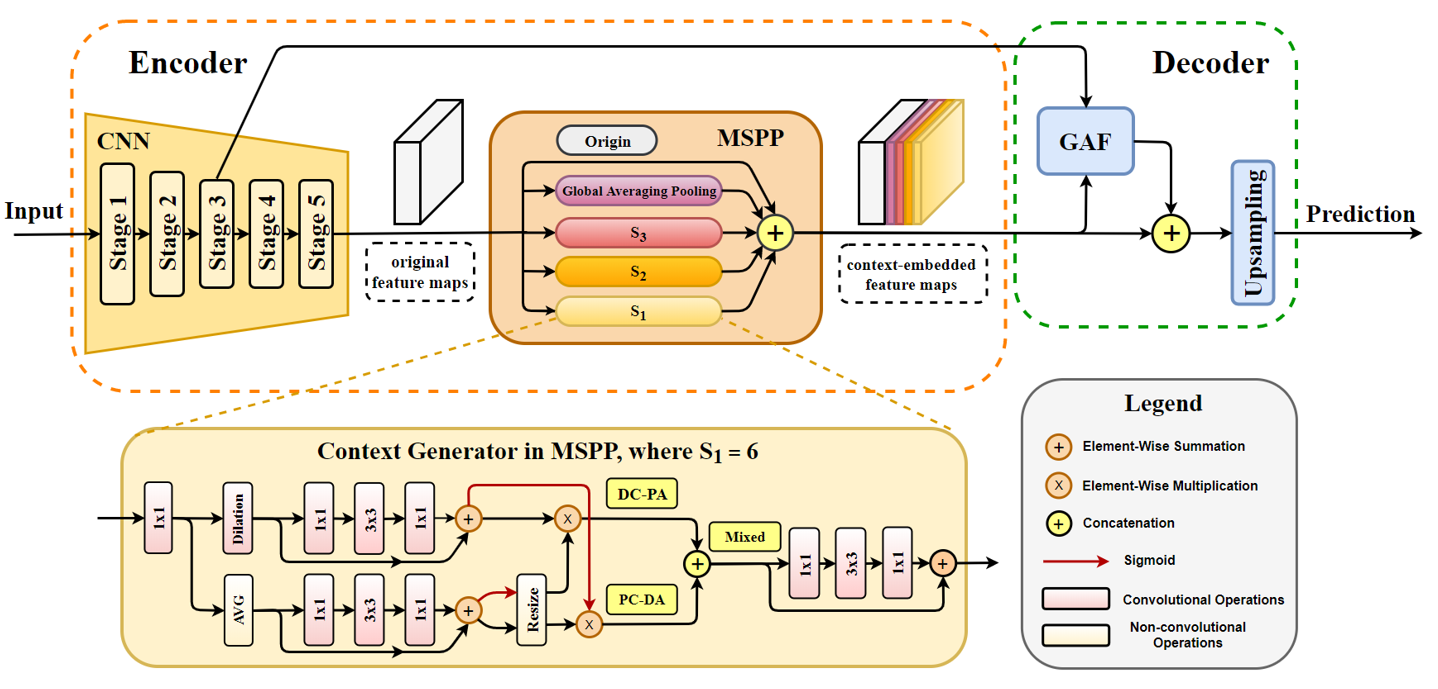

Semantic segmentation is a challenging task as each pixel in the image should be labeled accurately. To improve the performance of semantic segmentation, some Fully Convolutional Network (FCN) based semantic segmentation methods adopt a spatial pyramid pooling structure to enrich contextual information. Others employ an encoder-decoder architecture to recover object details gradually. We propose a semantic segmentation framework which combines the benefits of these approaches. Specifically, we propose a Mixed Spatial Pyramid Pooling (MSPP) module based on region-based average pooling and dilated convolution to obtain dense multi-level contextual priors. To further refine the details of objects more effectively, we also propose a Global-Attention Fusion (GAF) module to provide global context as guidance for low-level features. Our proposed method achieves an mIoU of 84.1% on the PASCAL VOC 2012 dataset and 80.4% on the Cityscapes dataset without using any post-processing or additional datasets for the pretrained model.
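
For readers unfamiliar with the mIoU figure quoted above, the metric is the per-class intersection-over-union averaged over classes. The sketch below computes it on flat label lists; the function name `mean_iou` and the choice to skip classes that appear in neither the prediction nor the ground truth are assumptions, and benchmark toolkits may additionally handle ignore labels and accumulate counts over a whole dataset.

```python
def mean_iou(pred, target, num_classes):
    """Mean intersection-over-union over classes present in pred or target.

    pred, target: equal-length lists of integer class labels, one per pixel.
    """
    ious = []
    for c in range(num_classes):
        inter = sum(1 for p, t in zip(pred, target) if p == c and t == c)
        pred_c = sum(1 for p in pred if p == c)
        target_c = sum(1 for t in target if t == c)
        union = pred_c + target_c - inter
        if union == 0:
            continue  # class absent from both maps: skip it (assumption)
        ious.append(inter / union)
    return sum(ious) / len(ious) if ious else 0.0
```

For example, with `pred = [0, 0, 1, 1]` and `target = [0, 1, 1, 1]`, class 0 has IoU 1/2 and class 1 has IoU 2/3, so the mean is 7/12.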

PAST RESEARCH PROJECTS

- Deep learning based object detection for autonomous driving

- Stereo-based pedestrian detection

- Real-time 3D reconstruction using RGB-D

- Distributed video coding (DVC).

- Multiple description coding in DVC.

- Multiview video coding.

- Depth extraction from a pair of stereoscopic images.

- Multiview video coding focusing on compression of depth maps, layered depth images and layered depth videos.

- View synthesis at arbitrary camera locations using concepts of depth image based rendering.

- Video anomaly detection.

- Network-aware and error-resilient video coding

- Wireless multimedia communication

- Cross-layer design for video transmission over wireless ad-hoc networks

Based on the popular two-stage detector (Faster R-CNN) and one-stage detector (SSD), our research focuses on improving the detection accuracy and the processing speed using spatial and temporal context information. The spatial context information extraction is carried out using spatial recurrent neural network and location-aware deformable convolution. Short-term and long-term temporal context information is modeled by optical flow and convolutional LSTM.

Our research speeds up the detection process by using a stereo camera and depth information to detect the ground plane on which pedestrians would normally stand. Thus, the ROI search range is greatly reduced, and the detection can be performed at real-time speed. Our research also features depth map estimation (stereo matching) based on a modified Census transform and mutual information, which is fast and robust against brightness inconsistency in outdoor environments. The pedestrian detection system is implemented on a low-profile mini-PC and tested in the Chicago urban area.
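
To illustrate why a Census-based matching cost tolerates brightness inconsistency, the sketch below implements the plain (unmodified) Census transform with a Hamming-distance cost. It is not the modified variant mentioned above, whose details are not given here; the function names, the 3x3 default window, and the border clamping are assumptions. Each pixel is encoded by which neighbors are darker than it, so adding a constant brightness offset to the image leaves every code unchanged.

```python
def census_transform(img, radius=1):
    """Plain Census transform of a grayscale image (list of lists of ints).

    Each pixel becomes a bit string: one bit per neighbor in the
    (2*radius+1)^2 window, set when the neighbor is darker than the center.
    Neighbors outside the image are clamped to the border.
    """
    h, w = len(img), len(img[0])
    codes = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            code = 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    if dx == 0 and dy == 0:
                        continue
                    ny = min(max(y + dy, 0), h - 1)
                    nx = min(max(x + dx, 0), w - 1)
                    bit = 1 if img[ny][nx] < img[y][x] else 0
                    code = (code << 1) | bit
            codes[y][x] = code
    return codes


def hamming(a, b):
    """Matching cost between two Census codes: number of differing bits."""
    return bin(a ^ b).count("1")
```

In stereo matching, the cost of assigning disparity d to a left-image pixel at (x, y) would then be the Hamming distance between its code and the code of the right-image pixel at (x - d, y).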

3D reconstruction, as exemplified by KinectFusion, is a well-established area of study in the field of robotics and computer vision. The objective is to recreate a real-world scene, and it has applications in areas like augmented reality (AR), robotic teleoperation, medical analysis, video games, etc. We focus on improving the performance of 3D reconstruction for challenging tasks such as moving object segmentation, tracking fast camera motion, modeling complex environments, etc.

DVC is commonly referred to as the reverse paradigm of conventional video coding, where the complexity is transferred from the encoder to the decoder. The target applications are video surveillance cameras, video conferencing and remote video sensors, which need to perform video encoding on resource-constrained devices.

Multiple description coding in DVC is an effort to reduce the effects of channel losses in DVC. By intentionally introducing redundancy into the encoded bitstreams, the ability of the codec to withstand channel losses is increased.
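
As a toy illustration of the multiple description idea, the sketch below uses a classic textbook scheme, temporal splitting of a sequence into even and odd frames, which is not necessarily the scheme studied in this project. Each description is decodable on its own, and when one is lost the missing frames are concealed by repeating the nearest received frame. The function names and the use of `None` to mark a lost description are assumptions.

```python
def split_descriptions(frames):
    """Split a frame sequence into two descriptions: even and odd frames."""
    return frames[0::2], frames[1::2]


def merge_descriptions(even, odd, total):
    """Rebuild `total` frames from the two descriptions.

    A lost description is passed as None; its frames are concealed by
    repeating the nearest frame from the description that did arrive.
    """
    if even is None and odd is None:
        raise ValueError("both descriptions lost")
    out = []
    for i in range(total):
        primary, fallback = (even, odd) if i % 2 == 0 else (odd, even)
        if primary is not None:
            out.append(primary[i // 2])
        else:
            # Conceal with a neighboring frame from the surviving description.
            j = min(i // 2, len(fallback) - 1)
            out.append(fallback[j])
    return out
```

With both descriptions received the sequence is recovered exactly; with one lost, playback continues at half the temporal resolution instead of failing outright, at the price of a lower coding efficiency than a single-description stream.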

Multiview video coding (MVC) is a hot research topic these days owing to advances in processing power and in algorithms for processing multiview video content. The lab focuses on all aspects of MVC starting from

Anomaly detection in video is a very important topic that the lab is conducting research into. Using concepts from machine learning, pattern recognition and stochastic processes, research is being conducted to extract useful features from video. Such research will be very useful to law enforcement authorities for automatic detection of anomalies in surveillance cameras distributed around a city.

Research is being conducted into improvement of H.264/AVC, joint source-channel coding, rate control and error control, error concealment, video distortion estimation and quality assessment.

Quality of Service provision for video transmission in wireless networks, multi-path transport over wireless ad-hoc networks, multimedia transport protocols.

Application-layer techniques for adaptive video transmission over wireless networks, adaptive cross-layer error protection, adaptive link-layer techniques, power-distortion optimized routing and scheduling.